PHD Experimental Results


We tested and evaluated PHD on a wireless testbed consisting of several Windows and Linux client laptops equipped with IEEE 802.11b Cisco Aironet 350 Wi-Fi cards and Mopogo BT dongles. PHD is implemented in Java and relies on the portable Java AHP (JAHP) and jFuzzyLogic libraries.

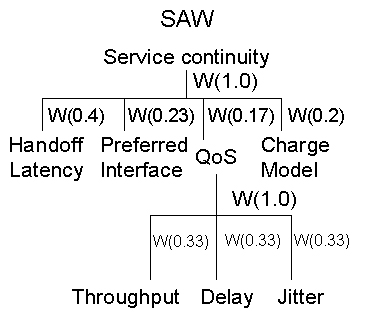

Adopted MADM methods

We considered two different MADM methods for wireless infrastructure ranking: the Analytic Hierarchy Process (AHP) and Simple Additive Weighting (SAW). For the evaluation, we adopted the following AHP hierarchy and SAW weights/scores.

AHP Hierarchy

SAW scores
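To make the SAW side of the comparison concrete, here is a minimal sketch of how a Simple Additive Weighting score is computed: each alternative receives the weighted sum of its normalized attribute values. The attribute names, weights, and scores below are hypothetical placeholders chosen only for illustration; they are not the SAW weights/scores used in the PHD evaluation.

```python
# Simple Additive Weighting (SAW): score = sum(weight * normalized attribute value).
# All weights and attribute values are hypothetical, NOT the PHD settings.

def saw_scores(alternatives, weights):
    """Return {name: weighted sum of attribute values}, with weights normalized to sum to 1."""
    total = sum(weights.values())
    norm_w = {attr: w / total for attr, w in weights.items()}
    return {
        name: sum(norm_w[attr] * value for attr, value in attrs.items())
        for name, attrs in alternatives.items()
    }

def rank(scores):
    """Order alternative names from highest to lowest score."""
    return sorted(scores, key=scores.get, reverse=True)

# Hypothetical objective weights (handoff latency weighted highest).
weights = {"handoff_latency": 0.5, "user_preference": 0.3, "bandwidth": 0.2}

# Hypothetical attribute values, already normalized to [0, 1] (higher is better).
alternatives = {
    "UMTS": {"handoff_latency": 0.4, "user_preference": 1.0, "bandwidth": 0.3},
    "Wi-Fi": {"handoff_latency": 0.8, "user_preference": 0.6, "bandwidth": 1.0},
    "BT": {"handoff_latency": 0.9, "user_preference": 0.2, "bandwidth": 0.1},
}

scores = saw_scores(alternatives, weights)
ranking = rank(scores)
```

Because the weights are fixed in advance, changing one attribute value for one alternative only shifts that alternative's own score; nothing in the method rebalances the other objectives.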

WIC Performance

Using the models above, we evaluated and compared the performance of the WIC module under different execution scenarios. To this end, we focused on the user preference for network interfaces, which is the objective with the highest priority/weight after handoff latency (see Figure 4). In run1, the preferred network interface order is UMTS, Wi-Fi, BT; in run2, it is changed to UMTS, BT, Wi-Fi (all other decision parameters are unchanged). As the results in the table below demonstrate, AHP, thanks to its greater flexibility and automatic weighting ability, ranks network infrastructures in the same order imposed by user preferences. SAW, by contrast, is simpler and cannot automatically compensate objective weights (e.g., the UMTS score is the same); as a consequence, in run2 the SAW ranking is less accurate and does not reflect the preferred network interface order.

Accuracy of wireless infrastructure scores evaluated by WIC using SAW and AHP
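As an illustration of how AHP turns an ordered user preference into weights, the sketch below builds a reciprocal pairwise comparison matrix from a preference order and derives a priority vector from it. It uses the row geometric mean approximation of the principal eigenvector, which is a common textbook shortcut and not necessarily what the JAHP library computes. The helper names and the fixed ratio of 2 between adjacent ranks are assumptions made only for this example.

```python
import math

def ahp_priorities(matrix):
    """Approximate the AHP priority vector with the row geometric mean method."""
    n = len(matrix)
    geo_means = [math.prod(row) ** (1.0 / n) for row in matrix]
    total = sum(geo_means)
    return [g / total for g in geo_means]

def pairwise_from_order(names, preferred_order, ratio=2.0):
    """Hypothetical helper: build a consistent reciprocal comparison matrix
    in which each rank step is `ratio` times more preferred than the next."""
    position = {name: i for i, name in enumerate(preferred_order)}
    return [[ratio ** (position[b] - position[a]) for b in names] for a in names]

def ranked(names, priorities):
    """Order names from highest to lowest priority."""
    return [n for n, _ in sorted(zip(names, priorities), key=lambda p: p[1], reverse=True)]

names = ["UMTS", "Wi-Fi", "BT"]

# run1: preferred order UMTS, Wi-Fi, BT; run2: preferred order UMTS, BT, Wi-Fi.
run1 = ahp_priorities(pairwise_from_order(names, ["UMTS", "Wi-Fi", "BT"]))
run2 = ahp_priorities(pairwise_from_order(names, ["UMTS", "BT", "Wi-Fi"]))

run1_ranking = ranked(names, run1)
run2_ranking = ranked(names, run2)
```

In this toy setting the priorities are recomputed from the pairwise judgments each time, so swapping two interfaces in the preference order swaps their derived weights as well.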

Time required for vertical handoff completion

We evaluated the average time required to complete a vertical handoff in four different situations: from BT to Wi-Fi and vice versa, using either AHP or SAW.

As shown in the figure, vertical handoff management time can be divided into wireless infrastructure decision time (TWIC), adaptation action decision time (TAAC), and adaptation action execution time (TEXEC). TWIC depends only on the adopted MADM technique: it is about 200 ms with AHP and about 80 ms with SAW. This is mainly due to the different algorithmic complexities, exponential for AHP and polynomial for SAW. TAAC, instead, is almost invariant and depends only slightly on handoff direction: 220 ms for BT-to-Wi-Fi and 161 ms for Wi-Fi-to-BT. The difference arises because most IPR have been designed for portable devices with limited profiles; hence, the similarity evaluation phase requires more time when the old IPR profile quality is more limited, as with BT. In any case, note that static adaptation calculation and ranking guarantee a low dependency of TAAC on vertical handoff direction.
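The decomposition above can be turned into a small worked calculation. The sketch below sums the two decision components reported in the text (TWIC and TAAC) for the four situations; TEXEC is not quoted in the text, so it is left as a caller-supplied parameter. The function and dictionary names are illustrative only, and the TWIC figures are the approximate values given above.

```python
# Approximate values quoted in the text, in milliseconds.
T_WIC_MS = {"AHP": 200, "SAW": 80}                 # infrastructure decision time
T_AAC_MS = {"BT->Wi-Fi": 220, "Wi-Fi->BT": 161}    # adaptation action decision time

def decision_time_ms(method, direction):
    """TWIC + TAAC for a given MADM method and handoff direction."""
    return T_WIC_MS[method] + T_AAC_MS[direction]

def handoff_time_ms(method, direction, t_exec_ms):
    """Total handoff management time; t_exec_ms (TEXEC) must be supplied
    by the caller because it is not reported in the text."""
    return decision_time_ms(method, direction) + t_exec_ms

# Decision time for the four evaluated situations.
decision_times = {
    (method, direction): decision_time_ms(method, direction)
    for method in T_WIC_MS
    for direction in T_AAC_MS
}
```

With these figures, the decision part ranges from roughly 241 ms (SAW, Wi-Fi-to-BT) to roughly 420 ms (AHP, BT-to-Wi-Fi).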


Other experimental results will be available soon.

11-mar-09